REPORT: ALOK SEMWAL

The days of faceless, unaccountable AI content on Indian social media may finally be numbered. Starting today, platforms like X (formerly Twitter), YouTube, Snapchat, and Facebook are legally bound to label every piece of AI-generated content, and if a deepfake surfaces on their platforms, they have just three hours to pull it down.

This isn’t a warning or a guideline. It’s the law.

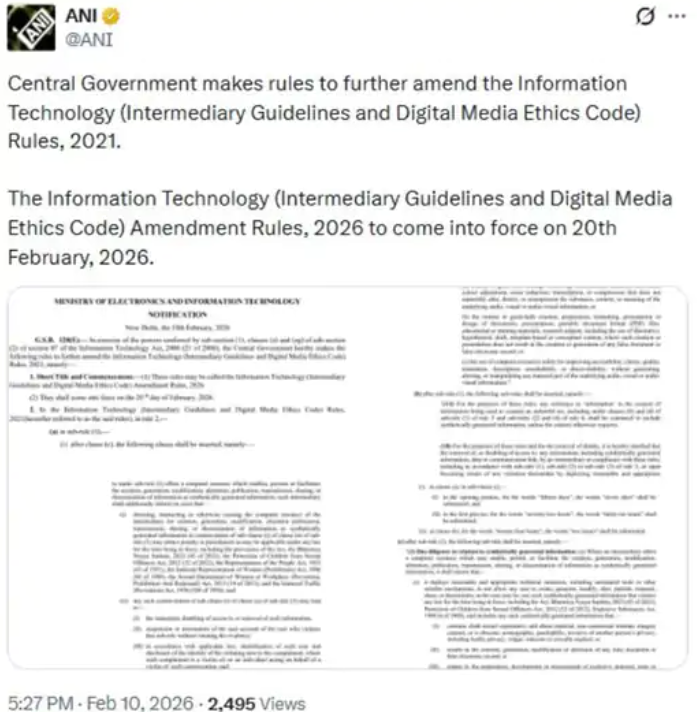

The Ministry of Electronics and Information Technology has pushed through amendments to the IT Rules 2021, backed by a formal notification issued on February 10. The message to Big Tech is clear — clean up your platforms, or face the consequences.

Why Now?

The timing is no accident. As AI tools have grown cheaper and more accessible, so has the ability to deceive. Doctored videos of politicians, fake audio clips, fabricated news — the internet has been flooded with content that looks real but isn’t. These new rules are a direct response to that growing threat, particularly around misinformation and the manipulation of public opinion during elections.

The Prime Minister himself drove the point home at a recent AI summit, drawing a simple but powerful analogy: just as a packet of biscuits tells you exactly what’s inside it, digital content should tell you whether it was made by a human or a machine. An “authenticity label,” he argued, is no longer optional — it’s a necessity.

What Exactly Do the New Rules Require?

Under Rule 3(3), any platform hosting AI-generated or “synthetically generated” content must attach a clear, prominent label to it — no fine print, no burying it in the corner. For video content, the label must cover at least 10% of the visual area. For audio, it must be heard within the first 10% of the clip’s duration.
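To make those two thresholds concrete, here is a minimal Python sketch of the arithmetic as this report describes it. The function names are hypothetical and are not taken from the rules or from any platform's tooling. The sketch also assumes the video label is a simple rectangle and that the audio disclosure must finish, not merely start, within the first 10% of the clip; the rules as summarised here do not spell out either point.

```python
# Illustrative only: thresholds as described in this report, not an official test.
LABEL_SHARE_DENOMINATOR = 10  # "at least 10%" means label * 10 >= whole


def video_label_ok(frame_w: int, frame_h: int, label_w: int, label_h: int) -> bool:
    """Return True if a rectangular label covers at least 10% of the frame area."""
    if frame_w <= 0 or frame_h <= 0:
        raise ValueError("frame dimensions must be positive")
    frame_area = frame_w * frame_h
    label_area = label_w * label_h
    # Integer comparison avoids floating-point rounding at the exact boundary.
    return label_area * LABEL_SHARE_DENOMINATOR >= frame_area


def audio_label_ok(duration_s: float, label_start_s: float, label_end_s: float) -> bool:
    """Return True if the spoken disclosure sits inside the first 10% of the clip.

    Assumption: the whole disclosure must end within that window.
    """
    if duration_s <= 0:
        raise ValueError("duration must be positive")
    in_order = 0 <= label_start_s <= label_end_s
    return in_order and label_end_s * LABEL_SHARE_DENOMINATOR <= duration_s


if __name__ == "__main__":
    # A 1920x1080 frame has 2,073,600 pixels, so the label needs 207,360 or more.
    print(video_label_ok(1920, 1080, 640, 324))  # 640 * 324 = 207,360 pixels
    # A 60-second clip leaves a 6-second window for the disclosure.
    print(audio_label_ok(60.0, 0.0, 6.0))
```

On a full-HD frame, that works out to a label of roughly 640 by 324 pixels, and on a one-minute audio clip the disclosure would have to be over within the first six seconds.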

Beyond the visible label, a permanent unique metadata identifier must be embedded into the content itself — something that cannot be edited, hidden, or deleted by anyone. Platforms will also need to build technical systems capable of flagging AI content before it even goes live.

Three other significant changes round out the new framework. First, once an AI label is applied, it stays — no platform can allow users to strip or mask it. Second, companies must deploy automated tools to catch and block AI-generated content that is illegal, obscene, or deliberately deceptive. Third, every platform must remind its users at least once every three months that misusing AI or breaking these rules carries real consequences — fines, penalties, or worse.

Who Feels the Heat — Users or the Industry?

For everyday users, the shift is largely positive. Spotting a manipulated video or a fake audio clip will become far easier when there’s a mandatory marker telling you what you’re looking at. The spread of false information should, in theory, slow down.

For the platforms themselves, it’s a more complicated picture. Building the infrastructure to detect, tag, and monitor AI content at scale requires serious investment. Compliance won’t come cheap, and smaller players may struggle to keep up. That said, most industry observers agree the trade-off is worth it — the reputational and legal cost of being a haven for deepfakes is far steeper in the long run.

The Bigger Picture

The Ministry framed these changes as part of a broader mission to create an internet that is “open, safe, trusted, and accountable.” Those aren’t just bureaucratic buzzwords — they reflect a genuine reckoning with what generative AI has made possible, and how quickly things can spiral when there are no guardrails.

A quick note on deepfakes for the uninitiated: a deepfake is a digitally altered video or audio clip in which someone’s face, voice, or mannerisms are replaced or mimicked using AI. The technology has advanced to the point where even trained eyes can struggle to tell what is real from what has been generated by software.

India’s move puts it among a growing number of countries trying to get ahead of this problem — rather than scrambling to catch up after the damage is done.